
MuseTalk

MuseTalk: Real-Time High-Fidelity Video Dubbing via Spatio-Temporal Sampling

Yue Zhang*, Zhizhou Zhong*, Minhao Liu*, Zhaokang Chen, Bin Wu†, Yubin Zeng, Chao Zhan, Junxin Huang, Yingjie He, Wenjiang Zhou (*Equal Contribution, †Corresponding Author, benbinwu@tencent.com)

Lyra Lab, Tencent Music Entertainment


We introduce MuseTalk, a real-time, high-quality lip-syncing model (30fps+ on an NVIDIA Tesla V100). MuseTalk can be applied to input videos, e.g., those generated by MuseV, as part of a complete virtual human solution.

🔥 Updates

We're excited to unveil MuseTalk 1.5. This version (1) integrates perceptual loss, GAN loss, and sync loss into training, significantly boosting overall performance, and (2) adopts a two-stage training strategy and a spatio-temporal data sampling approach to strike a balance between visual quality and lip-sync accuracy. Learn more details here. The inference code, training code, and model weights of MuseTalk 1.5 are now available. 🚀

Overview

MuseTalk is a real-time, high-quality, audio-driven lip-syncing model trained in the latent space of ft-mse-vae, which

- modifies an unseen face according to the input audio, with a face region size of 256 x 256.

- supports audio in various languages, such as Chinese, English, and Japanese.

- supports real-time inference at 30fps+ on an NVIDIA Tesla V100.

- supports modification of the center point of the proposed face region, which SIGNIFICANTLY affects generation results.

- provides a checkpoint trained on HDTF and a private dataset.

News

- [03/28/2025] 📣 We are thrilled to announce the release of version 1.5. This version is a significant improvement over version 1.0, with enhanced clarity, identity consistency, and precise lip-speech synchronization. We have updated the technical report with more details.

- [10/18/2024] We release the technical report. The report details a model that outperforms the open-source L1-loss version: it adds GAN and perceptual losses for improved clarity, and a sync loss for enhanced performance.

- [04/17/2024] We release a pipeline that utilizes MuseTalk for real-time inference.

- [04/16/2024] We release a Gradio demo on HuggingFace Spaces (thanks to the HF team for their community grant).

- [04/02/2024] We release the MuseTalk project and pretrained models.

Model

MuseTalk was trained in latent space, where images were encoded by a frozen VAE and audio was encoded by a frozen whisper-tiny model. The architecture of the generation network was borrowed from the UNet of stable-diffusion-v1-4, with the audio embeddings fused into the image embeddings by cross-attention.
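The fusion step can be illustrated with a toy, single-head scaled dot-product cross-attention in plain Python, where image tokens provide the queries and audio tokens provide the keys and values. This is a sketch under simplifying assumptions: there are no learned query/key/value projections and only one head, and the token counts and feature sizes are hypothetical, whereas the real UNet uses the learned multi-head attention layers inherited from Stable Diffusion.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(scores: List[float]) -> List[float]:
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(image_tokens: Matrix, audio_tokens: Matrix) -> Matrix:
    """Single-head cross-attention without learned projections (simplification).

    Queries come from image tokens; keys and values come from audio tokens,
    so each output row is an audio-weighted mixture for one image position.
    """
    d = len(image_tokens[0])
    assert all(len(a) == d for a in audio_tokens), "feature dims must match"
    scale = 1.0 / math.sqrt(d)
    out: Matrix = []
    for q in image_tokens:
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in audio_tokens]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, audio_tokens)) for j in range(d)])
    return out


if __name__ == "__main__":
    image = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # 3 hypothetical image tokens
    audio = [[2.0, 0.0], [0.0, 2.0]]              # 2 hypothetical audio tokens
    fused = cross_attention(image, audio)
    print(len(fused), len(fused[0]))  # 3 2
```

Because the softmax weights sum to 1, an image token that scores every audio token equally receives the plain average of the audio values, which is what happens to the third example token.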

Note that although we use a very similar architecture as Stable Diffusion, MuseTalk is distinct in that it is NOT a diffusion model. Instead, MuseTalk operates by inpainting in the latent space with a single step.
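To make the single-step inpainting concrete, the sketch below traces tensor shapes (not values) through one generation step. Its details are assumptions drawn from our reading of the public inference code rather than from this README: the lower half of the face crop is masked, the masked-frame latent and a reference-frame latent are concatenated channel-wise into the UNet input, and the UNet runs exactly once at timestep 0 instead of looping over denoising steps. The 8x downsampling and 4 latent channels are the standard SD VAE geometry; the audio shape is hypothetical (384 is whisper-tiny's hidden size), and all function names are hypothetical stand-ins.

```python
from typing import Tuple

Shape = Tuple[int, int, int]  # (channels, height, width); values are not modeled

VAE_DOWNSAMPLE = 8      # standard SD VAE spatial factor
LATENT_CHANNELS = 4     # standard SD VAE latent channels


def vae_encode(image: Shape) -> Shape:
    # Hypothetical stand-in for the frozen VAE encoder (shape only).
    _, h, w = image
    return (LATENT_CHANNELS, h // VAE_DOWNSAMPLE, w // VAE_DOWNSAMPLE)


def vae_decode(latent: Shape) -> Shape:
    # Hypothetical stand-in for the frozen VAE decoder back to RGB (shape only).
    _, h, w = latent
    return (3, h * VAE_DOWNSAMPLE, w * VAE_DOWNSAMPLE)


def unet(x: Shape, timestep: int, audio: Tuple[int, int]) -> Shape:
    # Hypothetical stand-in: audio tokens condition the UNet via cross-attention,
    # and the output is a single 4-channel latent for the regenerated frame.
    assert timestep == 0, "single step: no denoising schedule"
    _, h, w = x
    return (LATENT_CHANNELS, h, w)


def generate_frame(face: Shape = (3, 256, 256),
                   audio: Tuple[int, int] = (50, 384)) -> Tuple[Shape, Shape]:
    masked = vae_encode(face)      # latent of the face with its lower half masked
    reference = vae_encode(face)   # latent of a reference frame of the same person
    unet_in = (masked[0] + reference[0], masked[1], masked[2])  # channel concat
    out_latent = unet(unet_in, timestep=0, audio=audio)         # one forward pass
    return unet_in, vae_decode(out_latent)


if __name__ == "__main__":
    print(generate_frame())
```

With a 256 x 256 crop this traces an (8, 32, 32) UNet input to a single (3, 256, 256) output frame in one forward pass, rather than through an iterative denoising loop.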

Cases

TODO:

- trained models and inference code.

- Huggingface Gradio demo.

- code for real-time inference.

- technical report.

- a better model with an updated technical report.

- real-time inference code for version 1.5.

- training and data preprocessing code.

- Issues and PRs to improve this repository are always welcome! 😊

Getting Started

We provide a detailed tutorial on the installation and basic usage of MuseTalk for new users:

Third party integration

Thanks to the third-party integrations, which make installation and use more convenient for everyone. Please note that we have not verified, maintained, or updated these third-party projects. Please refer to this project for specific results.

ComfyUI

Installation

To prepare the Python environment and install additional packages such as opencv, diffusers, mmcv, etc., please follow the steps below:

Build environment

We recommend Python >= 3.10 and CUDA 11.7. Then build the environment as follows:

pip install -r requirements.txt

mmlab packages

pip install --no-cache-dir -U openmim

mim install mmengine

mim install "mmcv>=2.0.1"

mim install "mmdet>=3.1.0"

mim install "mmpose>=1.1.0"
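After installing, a quick import check can catch a missing package before it fails mid-run. The sketch below uses only the standard library; the module list is our assumption of the import names for the packages mentioned above (opencv imports as `cv2`), so adjust it to match requirements.txt. It does not check the CUDA version.

```python
import importlib.util
import sys
from typing import Dict, Iterable

# Import names (not pip names) of packages the steps above install; extend as needed.
REQUIRED_MODULES = ("cv2", "diffusers", "mmengine", "mmcv", "mmdet", "mmpose")


def check_environment(modules: Iterable[str] = REQUIRED_MODULES,
                      min_python: tuple = (3, 10)) -> Dict[str, bool]:
    """Report whether Python is recent enough and each module is importable."""
    report = {"python>=%d.%d" % min_python: sys.version_info[:2] >= min_python}
    for name in modules:
        # find_spec returns None when a top-level module cannot be located.
        report[name] = importlib.util.find_spec(name) is not None
    return report


if __name__ == "__main__":
    for item, ok in check_environment().items():
        print(f"{'OK     ' if ok else 'MISSING'} {item}")
```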

Download ffmpeg-static

Download ffmpeg-static and set FFMPEG_PATH:

export FFMPEG_PATH=/path/to/ffmpeg

for example:

export FFMPEG_PATH=/musetalk/ffmpeg-4.4-amd64-static
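A quick way to confirm that the variable points at a usable binary is sketched below. It looks for an `ffmpeg` executable inside the directory named by FFMPEG_PATH and falls back to the regular PATH. This is a standalone check written for illustration, not MuseTalk's own lookup logic, and the fallback behavior is our assumption.

```python
import os
import shutil
from typing import Mapping, Optional


def resolve_ffmpeg(env: Mapping[str, str] = os.environ) -> Optional[str]:
    """Return the ffmpeg executable from FFMPEG_PATH, falling back to PATH."""
    ffmpeg_dir = env.get("FFMPEG_PATH")
    if ffmpeg_dir:
        # Restrict the lookup to the directory named by FFMPEG_PATH.
        found = shutil.which("ffmpeg", path=ffmpeg_dir)
        if found:
            return found
    # Fall back to whatever ffmpeg is already on the regular PATH (may be None).
    return shutil.which("ffmpeg")


if __name__ == "__main__":
    print(resolve_ffmpeg() or "ffmpeg not found; check FFMPEG_PATH")
```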

Download weights

You can download weights manually as follows:

- Download our trained weights.

# !pip install -U "huggingface_hub[cli]"

export HF_ENDPOINT=https://hf-mirror.com

huggingface-cli download TMElyralab/MuseTalk --local-dir models/

- Download the weights of other components:

Finally, these weights should be organized in models as follows:

./models/
├── musetalk
│   ├── musetalk.json
│   └── pytorch_model.bin
├── musetalkV15
│   ├── musetalk.json
│   └── unet.pth
├── syncnet
│   └── latentsync_syncnet.pt
├── dwpose
│   └── dw-ll_ucoco_384.pth
├── face-parse-bisent
│   ├── 79999_iter.pth
│   └── resnet18-5c106cde.pth
├── sd-vae-ft-mse
│   ├── config.json
│   └── diffusion_pytorch_model.bin
└── whisper
    ├── config.json
    ├── pytorch_model.bin
    └── preprocessor_config.json
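To check that the downloads landed where expected, the sketch below compares ./models/ against the tree above. The file list is copied from that tree; the helper name is hypothetical, and it only checks that each file exists, not that it is complete or uncorrupted.

```python
from pathlib import Path
from typing import List

# Expected files, relative to the models directory, copied from the tree above.
EXPECTED_FILES = [
    "musetalk/musetalk.json",
    "musetalk/pytorch_model.bin",
    "musetalkV15/musetalk.json",
    "musetalkV15/unet.pth",
    "syncnet/latentsync_syncnet.pt",
    "dwpose/dw-ll_ucoco_384.pth",
    "face-parse-bisent/79999_iter.pth",
    "face-parse-bisent/resnet18-5c106cde.pth",
    "sd-vae-ft-mse/config.json",
    "sd-vae-ft-mse/diffusion_pytorch_model.bin",
    "whisper/config.json",
    "whisper/pytorch_model.bin",
    "whisper/preprocessor_config.json",
]


def missing_weights(models_dir: str = "./models") -> List[str]:
    """Return the expected files that are not present under models_dir."""
    root = Path(models_dir)
    return [rel for rel in EXPECTED_FILES if not (root / rel).is_file()]


if __name__ == "__main__":
    missing = missing_weights()
    print("All weights found." if not missing else "Missing:\n  " + "\n  ".join(missing))
```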

Quickstart

Inference

We provide inference scripts for both versions of MuseTalk:

MuseTalk 1.5 (Recommended)

# Run MuseTalk 1.5 inference

sh inference.sh v1.5 normal

MuseTalk 1.0

# Run MuseTalk 1.0 inference

sh inference.sh v1.0 normal

The inference script supports both MuseTalk 1.5 and 1.0 models:

- For MuseTalk 1.5: Use the command above with the V1.5 model path

- For MuseTalk 1.0: Use the same script but point to the V1.0 model path

The configuration file configs/inference/test.yaml contains the inference settings, including:

- video_path: Path to the input video, image file, or directory of images

- audio_path: Path to the input audio file
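If you prefer to generate the configuration programmatically, the sketch below writes one entry per task. The `task_<n>` top-level keys reflect the layout we believe the shipped test.yaml uses, but treat them as an assumption and check them against the file in the repository. Values are emitted as JSON scalars, which are also valid YAML, so no YAML library is needed; the helper name is hypothetical.

```python
import json
from typing import Dict, List, Union

Scalar = Union[str, int, float, bool]


def build_inference_yaml(tasks: List[Dict[str, Scalar]]) -> str:
    """Render tasks as YAML text with hypothetical `task_<n>` top-level keys."""
    lines: List[str] = []
    for i, task in enumerate(tasks):
        # video_path and audio_path are the two settings documented above.
        for required in ("video_path", "audio_path"):
            if required not in task:
                raise ValueError(f"task {i} is missing {required!r}")
        lines.append(f"task_{i}:")
        for key, value in task.items():
            # json.dumps yields double-quoted strings and plain numbers, both valid YAML.
            lines.append(f"  {key}: {json.dumps(value)}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(build_inference_yaml([
        {"video_path": "data/video/example.mp4", "audio_path": "data/audio/example.wav"},
    ]), end="")
```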

Note: For optimal results, we recommend input videos at 25fps, the same frame rate used during model training. If your video has a lower frame rate, you can use frame interpolation or convert it to 25fps with ffmpeg.
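One way to do the conversion is to build the ffmpeg call in Python, as sketched below. The `fps` filter drops or duplicates frames to reach 25fps, while `minterpolate` synthesizes intermediate frames by motion interpolation, which is slower but closer to the frame interpolation suggested above. The helper name and the choice to copy the audio stream unchanged (`-c:a copy`) are our assumptions.

```python
import shlex
from typing import List


def to_25fps_command(src: str, dst: str, interpolate: bool = False,
                     ffmpeg: str = "ffmpeg") -> List[str]:
    """Build an ffmpeg argument list that re-times a video to 25 fps."""
    if interpolate:
        # Motion-compensated interpolation synthesizes in-between frames (slower).
        vf = "minterpolate=fps=25"
    else:
        # The fps filter duplicates or drops frames to hit the target rate.
        vf = "fps=25"
    return [ffmpeg, "-y", "-i", src, "-vf", vf, "-c:a", "copy", dst]


if __name__ == "__main__":
    print(shlex.join(to_25fps_command("input.mp4", "input_25fps.mp4")))
```

Pass the returned list to `subprocess.run(cmd, check=True)` to execute it; building an argument list rather than a shell string avoids quoting problems with paths that contain spaces.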

Real-time Inference

For real-time inference, use the following command:

# Run real-time inference

sh inference.sh v1.5 realtime # For MuseTalk 1.5

# or

sh inference.sh v1.0 realtime # For MuseTalk 1.0

The real-time inference configuration is in configs/inference/realtime.yaml, which includes:

- preparation: Set to True for new avatar preparation

- video_path: Path to the input video

- bbox_shift: Adjustable parameter for mouth region control

- audio_clips: List of audio clips for generation

Important notes for real-time inference:

- Set preparation to True when processing a new avatar.

- After preparation, the avatar will generate videos using the audio clips listed in audio_clips.

- The generation process can achieve 30fps+ on an NVIDIA Tesla V100.

- Set preparation to False to generate more videos with the same avatar.
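The sketch below renders one avatar entry for realtime.yaml, which makes the preparation workflow explicit: generate it with preparation set to True the first time, then with False to reuse the prepared avatar. The field names come from the configuration list above, but the top-level avatar key and the `audio_<n>` keys under audio_clips are hypothetical, so compare the output with the shipped configs/inference/realtime.yaml before using it.

```python
import json
from typing import List


def build_realtime_yaml(avatar_id: str, video_path: str, bbox_shift: int,
                        audio_clips: List[str], preparation: bool) -> str:
    """Render one avatar entry; avatar_id and the audio_<n> keys are hypothetical."""
    lines = [
        f"{avatar_id}:",
        # True on the first run for a new avatar, False when reusing it.
        f"  preparation: {json.dumps(preparation)}",
        f"  bbox_shift: {int(bbox_shift)}",
        f"  video_path: {json.dumps(video_path)}",
        "  audio_clips:",
    ]
    for i, clip in enumerate(audio_clips):
        lines.append(f"    audio_{i}: {json.dumps(clip)}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    clips = ["data/audio/clip_a.wav", "data/audio/clip_b.wav"]
    print(build_realtime_yaml("avatar_1", "data/video/example.mp4", 5, clips, True), end="")
```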

For faster generation without saving images, you can use:

python -m scripts.realtime_inference --inference_config configs/inference/realtime.yaml --skip_save_images

Training

Data Preparation

To train MuseTalk, you need to prepare your dataset following these steps:

- Place your source videos: for example, if you're using the HDTF dataset, place all your video files in ./dataset/HDTF/source.

- Run the preprocessing script:

python -m scripts.preprocess --config ./configs/training/preprocess.yaml

This script will:

- Extract frames from videos

- Detect and align faces

- Generate audio features

- Create the necessary data structure for training
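Before launching preprocessing, it can help to confirm that the source folder actually contains videos. The sketch below does that and then runs the command shown above with the current interpreter. The accepted file extensions are an assumption, and the directory MuseTalk actually reads is whatever preprocess.yaml specifies, so keep the two in sync; the helper names are hypothetical.

```python
import sys
from pathlib import Path
from typing import List

VIDEO_SUFFIXES = {".mp4", ".mov", ".avi", ".mkv"}  # assumed accepted formats


def find_source_videos(source_dir: str = "./dataset/HDTF/source") -> List[Path]:
    """List video files directly inside source_dir (non-recursive)."""
    root = Path(source_dir)
    if not root.is_dir():
        raise FileNotFoundError(f"{root} does not exist; place your videos there first")
    return sorted(p for p in root.iterdir() if p.suffix.lower() in VIDEO_SUFFIXES)


def preprocess_command(config: str = "./configs/training/preprocess.yaml") -> List[str]:
    # Same invocation as above, using the current interpreter.
    return [sys.executable, "-m", "scripts.preprocess", "--config", config]


if __name__ == "__main__":
    import subprocess

    videos = find_source_videos()
    print(f"Found {len(videos)} source videos")
    if videos:
        subprocess.run(preprocess_command(), check=True)
```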

Training Process

After data preprocessing, you can start the training process:

- First stage:

sh train.sh stage1

- Second stage:

sh train.sh stage2

Configuration Adjustment

Before starting the training, you should adjust the configuration files according to your hardware and requirements:

- GPU configuration (configs/training/gpu.yaml):
  - gpu_ids: Specify the GPU IDs you want to use (e.g., "0,1,2,3")
  - num_processes: Set this to match the number of GPUs you're using

- Stage 1 configuration (configs/training/stage1.yaml):
  - data.train_bs: Adjust the batch size based on your GPU memory (default: 32)
  - data.n_sample_frames: Number of sampled frames per video (default: 1)

- Stage 2 configuration (configs/training/stage2.yaml):
  - random_init_unet: Must be set to False to use the model from stage 1
  - data.train_bs: Smaller batch size due to high GPU memory cost (default: 2)
  - data.n_sample_frames: Higher value for temporal consistency (default: 16)
  - solver.gradient_accumulation_steps: Increase to simulate larger batch sizes (default: 8)
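Under standard data-parallel training, the settings above combine into an effective (global) batch size of train_bs × num_processes × gradient_accumulation_steps. The sketch below computes it and checks that num_processes matches the number of gpu_ids. The example assumes 8 GPUs, gradient accumulation of 1 in stage 1 (as in the memory table below), and the stage 2 defaults listed above; the helper names are hypothetical.

```python
from typing import List


def parse_gpu_ids(gpu_ids: str) -> List[int]:
    # "0,1,2,3" -> [0, 1, 2, 3]; whitespace around commas is tolerated.
    return [int(x) for x in gpu_ids.split(",") if x.strip()]


def effective_batch_size(train_bs: int, gpu_ids: str, num_processes: int,
                         grad_accum_steps: int = 1) -> int:
    """Samples per optimizer step under data parallelism with gradient accumulation."""
    n_gpus = len(parse_gpu_ids(gpu_ids))
    if n_gpus != num_processes:
        raise ValueError(f"num_processes={num_processes} but {n_gpus} GPU ids given")
    return train_bs * num_processes * grad_accum_steps


if __name__ == "__main__":
    eight = "0,1,2,3,4,5,6,7"
    print("stage 1:", effective_batch_size(32, eight, 8, 1))  # 256
    print("stage 2:", effective_batch_size(2, eight, 8, 8))   # 128
```

With these numbers, stage 1 updates on 32 × 8 × 1 = 256 samples per step and stage 2 on 2 × 8 × 8 = 128. Since stage 2 samples 16 frames per video, a per-GPU micro-batch there already holds roughly 2 × 16 = 32 frames, which is consistent with its smaller train_bs.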

GPU Memory Requirements

Based on our testing on a machine with 8 NVIDIA H20 GPUs:

Stage 1 Memory Usage

| Batch Size | Gradient Accumulation | Memory per GPU | Recommendation |
|---|---|---|---|
| 8 | 1 | ~32GB | |
| 16 | 1 | ~45GB | |
| 32 | 1 | ~74GB | ✓ |

Stage 2 Memory Usage

| Batch Size | Gradient Accumulation | Memory per GPU | Recommendation |
|---|---|---|---|
| 1 | 8 | ~54GB | |
| 2 | 2 | ~80GB | |
| 2 | 8 | ~85GB | ✓ |
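If your GPUs differ from the tested setup, the tables can be turned into a rough chooser, as in the sketch below. It encodes the reported measurements and picks the configuration with the largest effective batch (batch size × gradient accumulation) that fits in a given memory budget. The 4GB safety headroom is an arbitrary assumption, memory use on other GPUs or software versions may differ from these figures, and the helper names are hypothetical.

```python
from typing import List, NamedTuple, Optional


class MemRow(NamedTuple):
    batch_size: int
    grad_accum: int
    mem_gb: float  # approximate memory per GPU from the tables above


# Measurements reported above (8x NVIDIA H20); other hardware will differ.
STAGE1 = [MemRow(8, 1, 32), MemRow(16, 1, 45), MemRow(32, 1, 74)]
STAGE2 = [MemRow(1, 8, 54), MemRow(2, 2, 80), MemRow(2, 8, 85)]


def largest_fitting(rows: List[MemRow], gpu_mem_gb: float,
                    headroom_gb: float = 4.0) -> Optional[MemRow]:
    """Pick the row with the largest batch_size * grad_accum that fits in memory."""
    fitting = [r for r in rows if r.mem_gb + headroom_gb <= gpu_mem_gb]
    if not fitting:
        return None
    # Ties on effective batch are broken in favor of lower memory use.
    return max(fitting, key=lambda r: (r.batch_size * r.grad_accum, -r.mem_gb))


if __name__ == "__main__":
    print("stage 1 @ 80GB:", largest_fitting(STAGE1, 80))
    print("stage 2 @ 80GB:", largest_fitting(STAGE2, 80))
```

For example, `largest_fitting(STAGE2, 80)` returns the batch size 1, accumulation 8 row, because the ~80GB and ~85GB rows exceed the budget once the headroom is added.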

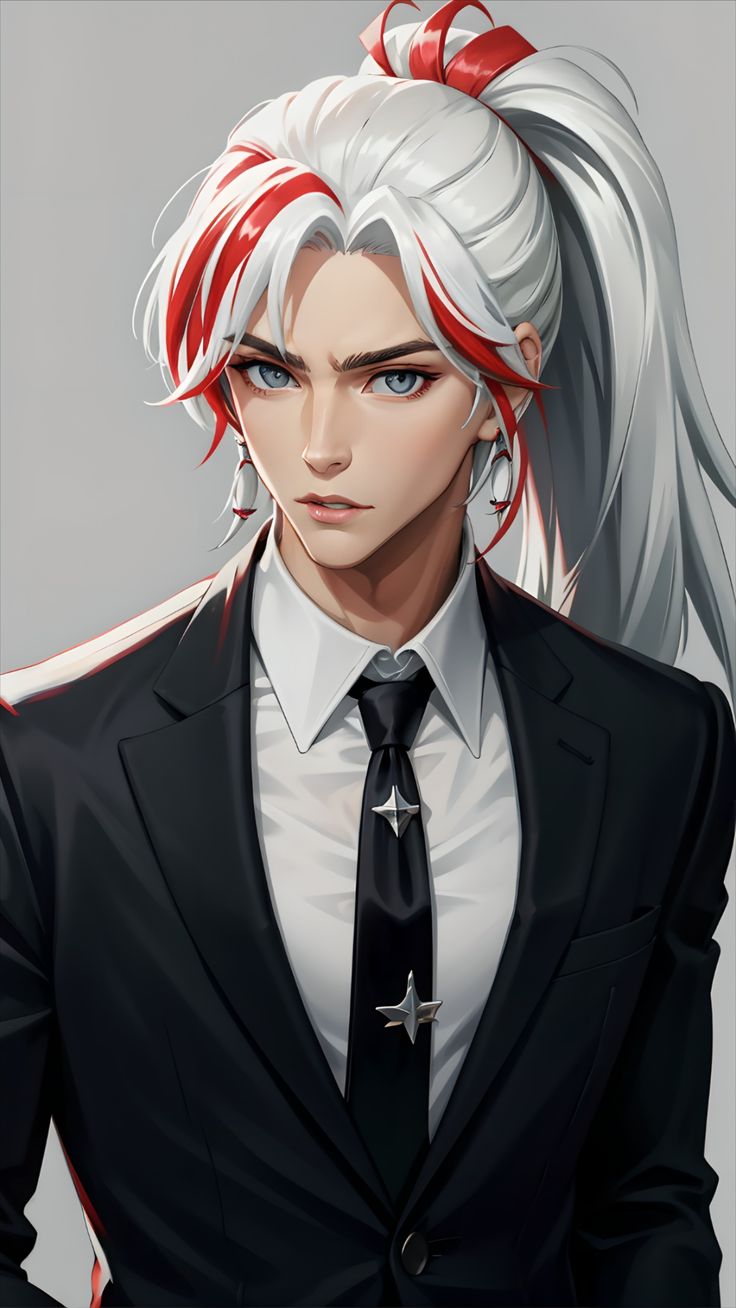

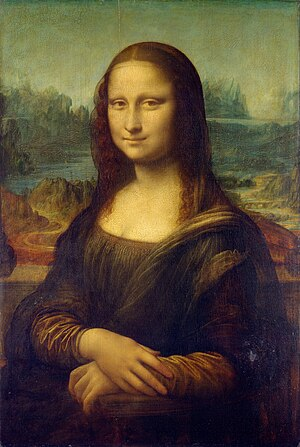

TestCases For 1.0

[Table of example videos: Image | MuseV | +MuseTalk]

Use of bbox_shift to have adjustable results (For 1.0)

🔎 We have found that the upper bound of the mask has an important impact on mouth openness. Thus, to control the mask region, we suggest using the bbox_shift parameter. Positive values (moving towards the lower half) increase mouth openness, while negative values (moving towards the upper half) decrease mouth openness.

You can start by running with the default configuration to obtain the adjustable value range, and then re-run the script within this range.

For example, in the case of Xinying Sun, running the default configuration shows that the adjustable value range is [-9, 9]. Then, to decrease the mouth openness, we set the value to -7.

python -m scripts.inference --inference_config configs/inference/test.yaml --bbox_shift -7
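The range-then-rerun workflow above can be scripted as sketched below: clamp the shift you want into the range reported by the default run, then build the same inference command. The helper names are hypothetical; the flags are the ones shown in the command above.

```python
import sys
from typing import List, Tuple


def clamp_bbox_shift(requested: int, valid_range: Tuple[int, int]) -> int:
    """Clamp a requested shift into the range reported by a default run."""
    lo, hi = valid_range
    return max(lo, min(hi, requested))


def inference_command(bbox_shift: int,
                      config: str = "configs/inference/test.yaml") -> List[str]:
    # Positive shift moves the mask's upper bound down (more mouth openness);
    # negative moves it up (less mouth openness).
    return [sys.executable, "-m", "scripts.inference",
            "--inference_config", config, "--bbox_shift", str(bbox_shift)]


if __name__ == "__main__":
    shift = clamp_bbox_shift(-7, (-9, 9))  # range reported in the Xinying Sun example
    print(inference_command(shift))
```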

📌 More technical details can be found in bbox_shift.

Combining MuseV and MuseTalk

As a complete solution for virtual human generation, we suggest first applying MuseV to generate a video (text-to-video, image-to-video, or pose-to-video) by referring to this. Frame interpolation is recommended to increase the frame rate. Then, you can use MuseTalk to generate a lip-sync video by referring to this.

Acknowledgement

- We thank open-source components like whisper, dwpose, face-alignment, face-parsing, S3FD and LatentSync.

- MuseTalk draws heavily on diffusers and isaacOnline/whisper.

- MuseTalk has been built on the HDTF dataset.

Thanks for open-sourcing!

Limitations

- Resolution: Though MuseTalk uses a face region size of 256 x 256, which makes it better than other open-source methods, it has not yet reached the theoretical resolution bound. We will continue to work on this problem. If you need higher resolution, you could apply super-resolution models such as GFPGAN in combination with MuseTalk.

- Identity preservation: Some details of the original face, such as mustache, lip shape, and color, are not well preserved.

- Jitter: There is some jitter, as the current pipeline adopts single-frame generation.

Citation

@article{musetalk,
  title={MuseTalk: Real-Time High-Fidelity Video Dubbing via Spatio-Temporal Sampling},
  author={Zhang, Yue and Zhong, Zhizhou and Liu, Minhao and Chen, Zhaokang and Wu, Bin and Zeng, Yubin and Zhan, Chao and He, Yingjie and Huang, Junxin and Zhou, Wenjiang},
  journal={arxiv},
  year={2025}
}

Disclaimer/License

- code: The code of MuseTalk is released under the MIT License. There is no limitation on either academic or commercial usage.

- model: The trained models are available for any purpose, even commercially.

- other open-source models: Other open-source models used must comply with their licenses, such as whisper, ft-mse-vae, dwpose, S3FD, etc.

- The test data are collected from the internet and are available for non-commercial research purposes only.

AIGC: This project strives to impact the domain of AI-driven video generation positively. Users are granted the freedom to create videos using this tool, but they are expected to comply with local laws and utilize it responsibly. The developers do not assume any responsibility for potential misuse by users.